The Talent System Is Breaking: AI, Layoffs, and the Quiet Redesign of Power

By Demetrius Thornton

Why the partnership between the General Counsel (GC) and the Chief Human Resources Officer (CHRO) is critical to building a new talent operating system amid AI, mass layoffs, and unstable legal guardrails.

Imagine a high-performing employee who has done everything the company asked: strong ratings, stretch assignments, and positive feedback. One morning, they join an all-hands call and learn that their role has been eliminated as part of a restructuring. AI, automation, and “efficiency” are part of the explanation. Their performance did not protect them; the role they occupied no longer fits the model. This experience is becoming more common as organizations work through disruptive change.

Now imagine a Black professional in a southern state, building a career at a company whose public values emphasize diversity and inclusion. Yet voting rights in their state have narrowed, directly affecting their life outside work. Within the organization, they may remain a high performer, but when the spreadsheets come out and hard choices are made, they are not simply an employee; they are a person whose civic rights and protections have been reduced, both in the wider society and, too often, within corporate systems.

In both scenarios, performance alone is not the core issue. The real question becomes whether the current talent system meaningfully protects, recognizes, or even sees these individuals. As AI, cost cutting, and shifting legal guardrails collide, companies must grapple with how their stated values hold up, who truly holds power, and how that power is defined.

Early in my career, a senior leader gave me a piece of advice I have never forgotten: “Always take a partner.” Before you make a big people decision, do not sit in judgment alone. Get feedback. Do not carry the risk by yourself. In the line of work I chose (talent strategy, succession, and organizational change), that partner has almost always been Legal. Over the years, I learned that when HR and the General Counsel walk into a problem together, the company makes better people decisions. When they do not, the organization almost always pays for it later.

Today, that advice points to a bigger challenge. The convergence of AI, layoffs, and unpredictable legal shifts reveals that the legacy talent system (its ratings, pipelines, and succession plans) was not built for this kind of volatility. Success depends on consciously redesigning it, and the GC-CHRO partnership is key to writing the next version rather than letting outdated models prevail.

This is not a “meritocracy” problem. It is an operating model problem.

When performance is necessary but not sufficient

We are learning in real time that you can be excellent in a role your company no longer intends to protect. Performance is necessary, but it is no longer sufficient. The deeper question has become: what kind of role is this, and what kind of power does it hold when the spreadsheet comes out?

Development timelines are also less reliable. Talent plans that call someone “ready in three years” assume the role and the business model will last that long, and AI is already undermining that assumption. Declaring someone “promotable in one to two years” doesn’t guarantee that the role will even exist. The safest place for people is not simply on a list, but in roles and pathways that matter for how the company will operate in an AI-shaped future.

Most organizations are experimenting with AI while they are still figuring out how much to invest, how to govern it, and what risks they are really taking. Tools are being switched on in recruiting, performance management, workforce planning, and internal mobility long before leadership fully understands their second- and third-order effects.

At the same time, the external rules that once acted as guardrails (civil rights enforcement posture, guidance on AI in hiring, and the broader ecosystem of labor and voting rights) are in flux. The net result is a kind of organized uncertainty. No one can say with confidence which roles will be safe in three years, how AI will reshape who gets seen and advanced, or how often an external referee will step in when something goes wrong.

In this environment, incremental adjustments are insufficient. Companies need a fundamentally new operating model, one that explicitly treats the allocation of power in hiring, promotion, and succession as the main driver of fairness and adaptability.

All talent decisions are power decisions

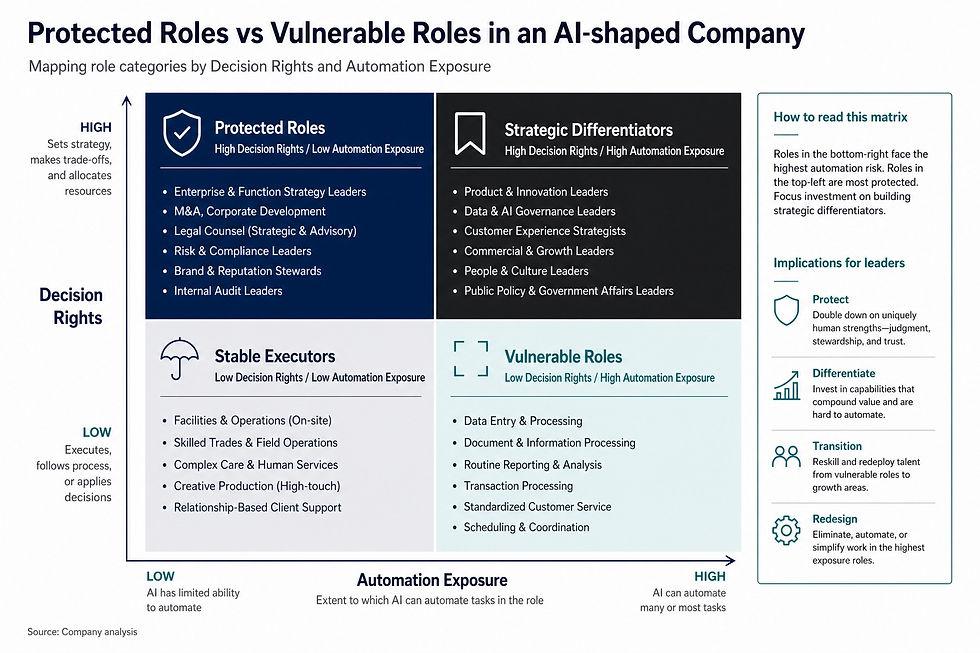

We do not like to use the word “power” in corporate talent conversations. We talk about performance, potential, culture, and engagement. But beneath all of that, the core question is: who holds power here, and how are we deciding that? By power, I mean something very specific: who has decision rights over money, risk, and people. Who decides what gets funded, what gets cut, who gets staffed, and who gets approved. Who has the authority to say yes or no to another person’s livelihood. Organizations generally know which roles hold true decision power: P&L owners, strategy gatekeepers, and those who approve hiring and promotions. Yet most have not explicitly mapped these positions or evaluated whether their selection systems make sense in a volatile, AI-driven world.

At the other end of the spectrum are roles that absorb decisions made elsewhere: customer support, back office, repeatable production, administrative and operational work, some analyst and content roles, and certain frontline jobs. These roles are critical, but they tend to be easier to cut, automate, or outsource. They are also the roles where people often feel the impact first when AI or cost cutting shows up.

When you overlay AI, mass layoffs, and shifting external guardrails on top of this landscape, you get a simple but uncomfortable reality:

People in roles with durable power have more room to adapt.

People in roles that can be eliminated or automated feel the impact of every shock, regardless of performance.

If the company has never consciously defined power, mapped where it sits, or redesigned its talent systems around that truth, it is essentially flying blind.

For many Black and Brown professionals, women, and first-generation talent, this is not an abstract exercise; it is a visible pattern. Their performance rises, but their proximity to real decision rights does not. In an era when AI tools can replicate or amplify existing racial and gender biases in hiring and resume screening, ignoring the power question is not neutral; it compounds risk for the same communities that have historically been excluded. Confronting that pattern is the opportunity for GCs and CHROs right now.

Why GC and CHRO have never mattered more

When AI is being wired into hiring and promotion systems, when large job cuts are being justified in the name of efficiency, and when external guardrails are less predictable, two questions rise above the rest:

Do we actually understand how our systems are allocating power?

Are we comfortable being accountable for that when the environment shifts again?

HR and Legal must take joint accountability for these questions. If you are in a GC or CHRO role, take action: schedule regular strategic sessions focused on how your talent systems shape power, establish clear goals for transparency with your executive team and board, and ensure ongoing review of your decisions to adapt as conditions change.

Their shared role can be broken into three key areas.

1. Define power for your company, not just promotability timelines

If you can name your values, you can name what power means in your context. Together, the GC and CHRO should lead a simple, honest exercise: convene a focused session to map power, set milestones to track progress, and communicate the findings clearly to leadership. That session should answer three questions:

Which roles have formal decision rights over capital, strategy, and people?

Which roles are structurally designed to execute and absorb decisions made elsewhere?

What are the pathways, if any, for people to move from one category into the other?

Most companies can tell you who is “ready now,” who is “ready in two years,” and who is somewhere in the pipeline. Far fewer can tell you something more important: how this company actually defines power. In a world where roles, structures, and even business models do not hold still, “ready in three years” is only meaningful if you are clear about ready for what, and whether those roles will still exist and still matter when the time comes. If nearly everyone in power looks the same, sounds the same, and comes through the same channels, your company has learned to read a very specific kind of person as naturally fit for power. That is not a meritocracy problem. It is an operating model problem.

2. Treat AI as an organ in the talent system, not a gadget

Right now, many companies are deploying AI as if it were a series of standalone tools: a resume screener here, a ranking algorithm there, an internal marketplace over here. From the perspective of power, these are not standalone tools; they are organs in the body of your talent system.

If a resume screening model systematically prefers certain profiles or backgrounds, it is making power decisions about who even gets in the door. If an internal AI marketplace mostly surfaces a narrow group of people for stretch assignments or leadership programs, it is making power decisions about who gets visibility. If a performance algorithm downgrades certain patterns of work and upgrades others, it is making power decisions about who is seen as promotable.

GC and CHRO together should be asking:

Where is AI already influencing visibility, ranking, or selection?

What assumptions are those systems learning from our history?

What safeguards and overrides exist when something does not look right?

3. Reset manager accountability around power

Much of the DEI era focused on moving numbers through programs, messaging, and representation metrics. The work that remains is quieter and more structural: who gets the opportunities that lead to power, and how are we holding managers accountable for that?

Here, the GC and CHRO partnership can do something practical:

Define manager “platform” responsibilities: a manager’s job is not just to hit targets; it is to run a fair opportunity platform that governs who gets stretch work, who gets coached, who gets visibility, and who gets put forward for roles that hold power.

Tie manager evaluation to how they run that platform, using process metrics: how many direct reports have development plans, how often they discuss career paths, how transparently they allocate high-profile work, and how they use or override AI recommendations.

Link a slice of executive compensation to process quality: not “hit this demographic target,” but “follow these documented, reviewable decision processes for hiring, promotion, and succession.”

When managers and executives know they will be evaluated not just on results but on how they allocate opportunities that lead to power, the internal operating system starts to change.

Power as a design choice

If the problem is the operating model, not abstract fairness, the work changes.

You can start by treating power as a design choice:

Map the protected roles. Look at recent cycles of cuts and reorganizations. Which roles stayed intact or even gained resources? Which ones were repeatedly “simplified”?

Map the real decision rights. List out the decisions that truly move the company: capital allocation, AI strategy, platform bets, and major risk calls. Who is in the room when those are made? Who is never there, no matter how senior on paper?

Overlay identity and class. Look at who tends to occupy those protected roles and decision rights. Do they all look similar in ways your meritocracy story does not explain?

Audit your “ready in X years” lists. How many of the people labeled “ready” are pointed at roles that might not exist, or that are structurally peripheral to how power actually moves here?

Decide what you want to be true. Based on everything above, what would it look like to treat your stated values as operating model constraints, not slogans?

None of this requires perfection. It does require letting go of the comfort of thinking that if your top performers look similar, it must be because the process was pure.

The choice inside the noise

There is a lot happening outside your walls that you cannot control: market volatility, AI hype cycles, political noise, and changing enforcement. Inside your walls, you still make a choice. You can let that instability write your internal rules by default, clinging to an old meritocracy story while your operating model quietly reproduces the same narrow circle of power. Or you can decide what you will stand for in how power is granted, shared, and renewed, and align your structures, your AI tools, your promotions, and your protections to that choice.

In an AI-shaped, volatile landscape, the companies that will keep trust are not the ones that talk most about fairness, but the ones that treat power as an explicit design decision and take responsibility for how they allocate it.

Demetrius D. Thornton is a talent, workforce, and career strategy executive. He is a former Senior Vice President and Head of Talent at Madison Square Garden Entertainment and a former Deputy Chief of Staff in New York City government. He is an adjunct professor at New York University and Founder and CEO of Limmor Group. He is also the creator of the SCOPE framework and author of American Trickery.
